We recently worked with a bilingual church that wanted to provide English subtitles for a Japanese-language service. Since we don’t know Japanese, we decided to run spf.io ourselves for a month to find out what it is like to operate it when you don’t know the spoken language.

Using the autopilot feature, we didn’t need to know the language in order to operate the technology. We just set the captioner to listen for Japanese, enabled autopilot, and spf.io automatically sent out captions and subtitles when the speaker naturally paused in speech.

The “Rare Pause” Problem

However, because the speaker would talk quickly without pausing for long stretches, the experience for people receiving translation felt choppy at times. Seconds would pass with no subtitles until the speaker paused naturally in their speech, and then a large chunk of captions and translations would be released all at once. Users could lag behind the speaker by several sentences, which is similar to what you might experience with amateur simultaneous interpretation.

To reduce this lag time, we clicked the caption “line-break” button whenever we felt that the speaker took a breath or had a quiet moment in the flow of their speech. This meant captions were released much more quickly, which made the translation feel more responsive and in sync. Unfortunately, because we don’t know Japanese, we could not time these manual line breaks perfectly. Some breaks landed in the middle of a thought, and translation quality went down as a result.

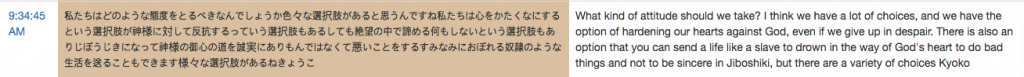

Let’s take one Japanese sermon as an example. On autopilot, the sermon was released as 46 utterances (about 1 utterance every 60 seconds), but with manual line breaks the same sermon became a much smoother 226 utterances (about 1 utterance every 12 seconds). In Example 1a, the autopilot version has very long chunks of text, but the translation is more understandable. The manual line break version (Example 1b) is easier to follow during a service because you get something new to read very often, but its Japanese-to-English translation is harder to understand.
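To make the arithmetic behind those figures concrete, here is a small Python sketch. It uses only the counts and approximate rates quoted above; the sermon length is not stated in this post, so the durations it computes are inferred from those rounded numbers and should be read as estimates.

```python
# Counts and approximate rates quoted in the post.
AUTOPILOT_UTTERANCES = 46
AUTOPILOT_SECONDS_PER_UTTERANCE = 60   # approximate
MANUAL_UTTERANCES = 226
MANUAL_SECONDS_PER_UTTERANCE = 12      # approximate


def implied_duration_seconds(utterances: int, seconds_per_utterance: float) -> float:
    """Estimate total speaking time from an utterance count and an average interval."""
    return utterances * seconds_per_utterance


# Both modes describe the same sermon, so the two estimates should roughly agree.
autopilot_duration = implied_duration_seconds(
    AUTOPILOT_UTTERANCES, AUTOPILOT_SECONDS_PER_UTTERANCE
)
manual_duration = implied_duration_seconds(
    MANUAL_UTTERANCES, MANUAL_SECONDS_PER_UTTERANCE
)

# How many times more often the audience saw new text with manual line breaks.
release_ratio = MANUAL_UTTERANCES / AUTOPILOT_UTTERANCES

print(f"Autopilot estimate: {autopilot_duration / 60:.1f} minutes")
print(f"Manual estimate:    {manual_duration / 60:.1f} minutes")
print(f"Manual line breaks released text about {release_ratio:.1f}x as often")
```

Both estimates come out at roughly 45 minutes, which is what you would expect for the same sermon, and the manual version delivered new text to the audience nearly five times as often.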

So, can you control spf.io without knowing the spoken language?

Yes, thanks to autopilot.

But as you can see, there is a trade-off between responsiveness and translation quality. Autopilot produces captions and translations with less “noise”, but during a service it feels less responsive than manual line breaks do.

So what can we do to make the best experience possible?

3 Solutions

#1: The Speaker Prepares a Manuscript

The highest quality solution leverages preparation. If a speaker writes a manuscript and uploads it to spf.io in advance, the text can be automatically translated ahead of time. Then the controller can release subtitles for an utterance exactly as the speaker says it, resulting in a magical experience for the audience. Scripts should be preferred whenever possible because of the excellent audience experience they provide, but when they are not feasible, you can try the following approaches.

#2: The Speaker Pauses in Their Speech More Often

On autopilot, spf.io waits for a speaker to pause before it finalizes the caption and releases it to the audience. By pausing more frequently, a speaker naturally improves the quality of the speech recognition and hence the translation. It may also help their native-language listeners keep up!
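The idea of releasing a caption when the speaker pauses can be illustrated with a short Python sketch. This is not spf.io’s implementation, which this post does not describe. The `Word` type, the `split_on_pauses` function, and the one-second `min_pause` threshold are all hypothetical, chosen only to show how longer gaps between words lead to smaller, more frequent chunks.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Word:
    """A recognized word with start and end times in seconds (hypothetical type)."""
    text: str
    start: float
    end: float


def split_on_pauses(words: List[Word], min_pause: float = 1.0) -> List[str]:
    """Group words into utterances, starting a new one after each long silence.

    min_pause is an assumed threshold in seconds, not a documented spf.io setting.
    """
    utterances: List[str] = []
    current: List[Word] = []
    for word in words:
        # A gap of at least min_pause since the previous word closes the utterance.
        if current and word.start - current[-1].end >= min_pause:
            utterances.append(" ".join(w.text for w in current))
            current = []
        current.append(word)
    if current:
        # Whatever is left when the speech ends forms the final utterance.
        utterances.append(" ".join(w.text for w in current))
    return utterances


# Illustrative timings: a 1.5 second pause after "pray" and none elsewhere.
speech = [
    Word("Let", 0.0, 0.3),
    Word("us", 0.3, 0.5),
    Word("pray", 0.5, 1.0),
    Word("Lord", 2.5, 2.9),
    Word("hear", 3.0, 3.3),
    Word("us", 3.3, 3.6),
]

for utterance in split_on_pauses(speech):
    print(utterance)
```

With these made-up timings the words are released as two utterances, split at the pause. A speaker who never leaves a gap that long would produce one large chunk instead, which is the “rare pause” problem described earlier.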

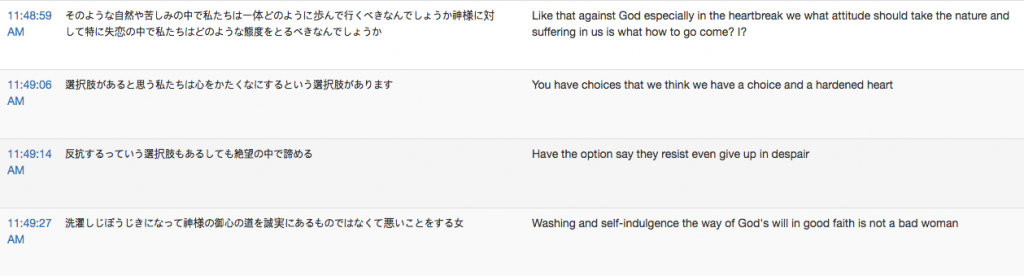

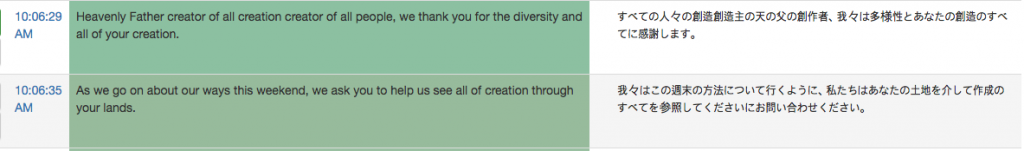

In the same way that a speaker would give a human interpreter time to speak with consecutive interpretation, a 1-2 second pause between sentences is all that spf.io needs to send out a translation. Spf.io on autopilot will be able to send out smaller chunks of text more often as a result. The example below shows the improved flow when the speaker paused between sentences, such as in times of prayer:

#3: The Operator Understands the Spoken Language

Finding someone who understands the spoken language is another way to improve the audience experience. Because they know the nuances and flow of the language, they can insert line breaks at appropriate times while monitoring the captioner’s output. If they see any glaring transcription errors (such as with names, phrases from other languages, and mumbled speech), they can correct the output before it is released. This solution helps with quality assurance and gives you peace of mind. It also has the side benefit of creating training data that helps the captioner avoid future errors.

This person does not need to be bilingual, but they must be able to listen to and read the source language simultaneously as well as type out corrections. Thankfully, finding someone monolingual who can read and type is often easier than finding a skilled bilingual interpreter.

Let Autopilot Run

We were very excited to run spf.io without any knowledge of Japanese. Though far from perfect, the automatic English translation we received live during the service often gave us the gist of the preacher’s message and it was great to go from zero understanding to being encouraged by the exhortation to “trust God in the wilderness” expounded from the book of Hebrews.

If you wish to reach multilingual audiences, you will find that preparing scripts, pausing intentionally, and asking an assistant to operate spf.io can help improve the experience. Even in cases where autopilot is sufficient, having someone monitor spf.io is important because it ensures your audience is receiving the best experience possible.

When the solutions suggested above aren’t feasible, the best option is to simply let autopilot run. An operator who doesn’t know the spoken language will mistime line breaks and cannot correct errors, which makes it very hard for the audience to comprehend the translation. While the flow of subtitles won’t be as smooth on autopilot (i.e., there will be longer delays between the speaker’s words and the appearance of subtitles), having comprehensible translation is preferable.

It takes thoughtful work to create a great multilingual experience, but the joys of being part of a multilingual community and connecting across the language barrier make it worthwhile. We built spf.io to help you experience those benefits and grow. If this fits with your organization’s dreams and needs, contact us to get started!