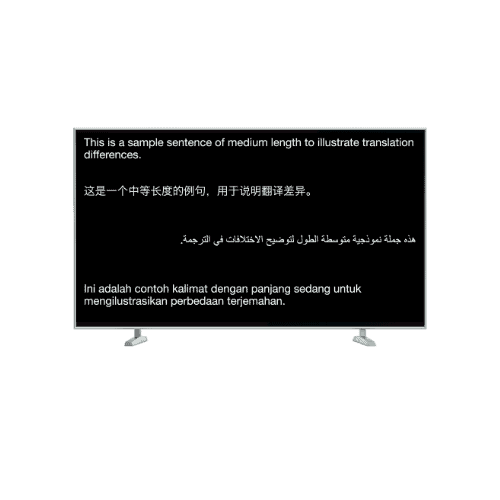

Showing real-time subtitles in multiple languages is a tricky problem. Translations of the same sentence vary in length from language to language. For example, Chinese tends to be compact, English is in the mid-range, and languages like Indonesian tend to be longer in display length. Here’s an illustrative sample:

| Language (length in characters) | Text (Note: these translations are automatically generated without human review for the sake of illustration) |
|---|---|
| English (81) | This is a sample sentence of medium length to illustrate translation differences. |
| Simplified Chinese (21) | 这是一个中等长度的例句,用于说明翻译差异。 |
| Indonesian (92) | Ini adalah contoh kalimat dengan panjang sedang untuk mengilustrasikan perbedaan terjemahan. |
| Arabic (59) | هذه جملة نموذجية متوسطة الطول لتوضيح الاختلافات في الترجمة. |

Each language also has a different reading rate (characters per minute) which influences the number of characters that can be displayed at a time and how quickly subtitles can change.
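As a rough sketch of how a reading rate turns into timing, the snippet below converts a rate in characters per minute into the minimum time a subtitle needs on screen. The function name `min_display_seconds` and the rate of 900 characters per minute are illustrative assumptions, not measured values or spf.io settings.

```python
def min_display_seconds(text: str, chars_per_minute: float) -> float:
    """Estimate how long a subtitle must stay visible to be read once."""
    if chars_per_minute <= 0:
        raise ValueError("chars_per_minute must be positive")
    return len(text) * 60.0 / chars_per_minute


english = ("This is a sample sentence of medium length "
           "to illustrate translation differences.")

# Placeholder reading rate, chosen only to make the arithmetic visible.
print(round(min_display_seconds(english, 900), 1))  # 81 chars at 900 cpm -> 5.4
```

A slower reading rate for a language means either fewer characters per subtitle or a longer hold time before the subtitle changes.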

Whatever approach we take, the display should meet two goals:

- Readability: The subtitles should be easy to read. This should work for public displays like TVs and projectors where the audience can be seated at a distance.

- Synchronicity: The subtitles should be mostly in sync between languages. We don’t want one language lagging too far behind the pace of the speaker and the other translations.

We also have several options for presenting subtitles to the audience: multilingual displays that show several languages on the same screen, and multiple displays or individual devices that show one language per screen. With one language per screen, each language can update independently, so this article focuses on the problem of synchronizing subtitles in different languages on the same public screen.

Multilingual Display

Up to four languages on a single display. These four could be shown in a vertical, horizontal, or square layout.

Multiple Displays

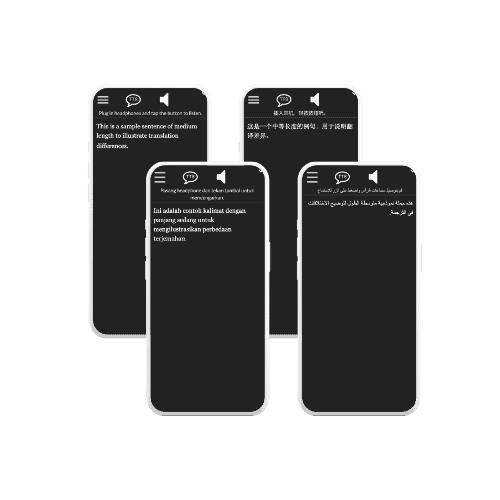

Individual Devices

Levers to Play With

So what can we tweak to show multilingual subtitles in sync with one another?

- Font size, letter spacing

- Choice of languages shown on a single screen

- Translation length

- Overflow behavior (complicated)

Let’s explore each of them one by one:

Font size adjusts how many characters can fit on the screen at a time. If the size is too small, it will be hard for the audience to read from a distance. If it is too large, overcrowding and choppy text can also make it hard to read. Adjusting letter spacing can make letters appear closer together without shrinking the font size.
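To make the trade-off concrete, here is a minimal sketch that estimates how many characters fit on one line for a given font size and letter spacing. It assumes every glyph is about half as wide as the font size (`glyph_width_ratio=0.5`), which is a crude placeholder: real widths depend on the font and the script, so treat the numbers as illustrative only.

```python
import math


def chars_per_line(width_px: float, font_size_px: float,
                   letter_spacing_px: float = 0.0,
                   glyph_width_ratio: float = 0.5) -> int:
    """Rough count of characters that fit on one line of the display."""
    # Horizontal space used by one character, including letter spacing.
    advance = font_size_px * glyph_width_ratio + letter_spacing_px
    if advance <= 0:
        raise ValueError("font size and letter spacing leave no glyph width")
    return math.floor(width_px / advance)


print(chars_per_line(1920, 64))        # 1920 / 32 -> 60
print(chars_per_line(1920, 64, -2.0))  # tighter spacing: 1920 / 30 -> 64
print(chars_per_line(1920, 96))        # larger font: 1920 / 48 -> 40
```

In this model, tightening the letter spacing by two pixels buys four extra characters without touching the font size, while a larger font cuts the capacity by a third.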

Choice of languages lets us put languages with similar character lengths on the same screen. For example, English and Russian translations may be similar enough in length to share a display without getting significantly out of sync. We can also show fewer languages (e.g. two) on a multilingual display to leave more room for translations of different lengths.
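One way to explore this is to group languages by how long their translations tend to be. The sketch below uses the character counts from the sample table as input; the function `group_by_length` and the `max_ratio` threshold of 1.6 are hypothetical, and character count is only a proxy for rendered width.

```python
from typing import Dict, List


def group_by_length(lengths: Dict[str, int], max_ratio: float,
                    per_screen: int = 4) -> List[List[str]]:
    """Greedily group languages whose sample lengths are close together."""
    screens: List[List[str]] = []
    for lang in sorted(lengths, key=lengths.get):
        current = screens[-1] if screens else None
        # Join the current screen only if this language is not much longer
        # than the shortest language already on it.
        if (current and len(current) < per_screen
                and lengths[lang] <= lengths[current[0]] * max_ratio):
            current.append(lang)
        else:
            screens.append([lang])
    return screens


sample = {"English": 81, "Simplified Chinese": 21,
          "Indonesian": 92, "Arabic": 59}
print(group_by_length(sample, max_ratio=1.6))
# [['Simplified Chinese'], ['Arabic', 'English', 'Indonesian']]
```

With this threshold the compact Chinese translation ends up on its own screen, while the three longer languages share one.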

Translation length is an exciting new possibility with the advent of Large Language Models like ChatGPT. By instructing an AI to generate a translation of a specific length, we can make all translations similar in length to the source sentence. This “magical approach” is experimental; more research is needed on the quality of length-constrained translations.

Overflow Behavior

After tweaking the above parameters, we can still run into situations where we cannot make all four language subtitles fit into a single display. In these overflow situations we can:

- Truncate/hide parts of translations that are too long.

- Dynamically adjust the font size, letter spacing, and translation length to try to get a fit.

- Chunk up the translations into shorter bits and release them over time.

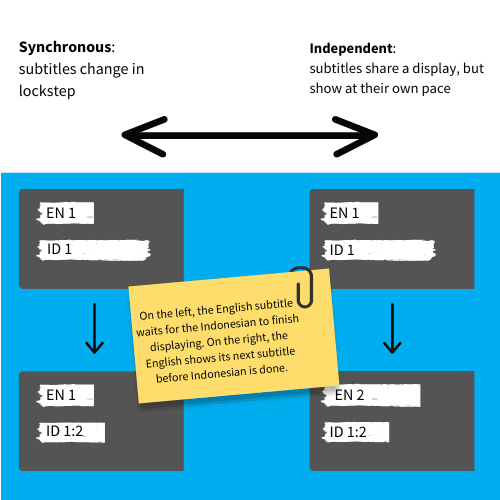

The first two options attempt to keep subtitles “synchronous”. The third option lets us decide what kind of “syncing” behavior we want for “sibling translations” when one is overflowing. How will other subtitles behave in response to the overflowing one?

To make this more concrete, consider the English source and Indonesian translation in the first example of this article. Let’s assume a display can show up to 42 characters for each subtitle. Then the texts would be roughly chunked up into:

| Subtitle Chunk 1 | Subtitle Chunk 2 | Subtitle Chunk 3 |
|---|---|---|
| This is a sample sentence of medium length | to illustrate translation differences. | |
| Ini adalah contoh kalimat dengan panjang | sedang untuk mengilustrasikan perbedaan | terjemahan. |
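The chunking in the table can be reproduced with a simple word-wrap. This is a minimal sketch using Python's standard `textwrap` module with the 42-character limit assumed above; the helper name `chunk_subtitle` is ours, and a production system may well break lines differently (for example at clause boundaries).

```python
import textwrap

MAX_CHARS = 42  # per-subtitle limit assumed in the example above

english = ("This is a sample sentence of medium length "
           "to illustrate translation differences.")
indonesian = ("Ini adalah contoh kalimat dengan panjang sedang "
              "untuk mengilustrasikan perbedaan terjemahan.")


def chunk_subtitle(text: str, max_chars: int = MAX_CHARS) -> list:
    """Split text at word boundaries into chunks of at most max_chars."""
    return textwrap.wrap(text, width=max_chars)


for label, text in (("English", english), ("Indonesian", indonesian)):
    chunks = chunk_subtitle(text)
    print(label, len(chunks), chunks)
```

English yields two chunks and Indonesian three, which is exactly the mismatch the rest of this section has to deal with.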

What should happen after “Subtitle Chunk 2” has been displayed for English?

The English channel could:

- Pause: Wait and statically show Chunk 2 until Chunk 3 of the Indonesian is finished being displayed.

- Blank: Disappear until the Indonesian is finished being displayed. If a new English subtitle comes in, either:

  - Show it immediately (the English subtitle acts independently)

  - Or pause until the Indonesian subtitle chunks are done being shown.

- Equalize: Adjust the English content’s chunking by spreading it out across 3 subtitle chunks.
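The three behaviors above can be sketched as small functions that stretch the shorter language to the same number of chunks as the longer one. The names `pause`, `blank`, and `equalize` mirror the list above; the splitting rule inside `equalize` (assigning each word to a slot by its position in the sentence) is just one plausible choice, not a description of how spf.io does it.

```python
from typing import List


def pause(chunks: List[str], total: int) -> List[str]:
    """Hold the last chunk on screen until the sibling language finishes."""
    return chunks + [chunks[-1]] * (total - len(chunks))


def blank(chunks: List[str], total: int) -> List[str]:
    """Show nothing while the sibling language finishes."""
    return chunks + [""] * (total - len(chunks))


def equalize(text: str, total: int) -> List[str]:
    """Spread the words of text across exactly `total` chunks."""
    buckets: List[List[str]] = [[] for _ in range(total)]
    position = 0
    for word in text.split():
        start = text.index(word, position)
        midpoint = start + len(word) / 2
        # Slot is chosen by where the word's midpoint falls in the sentence.
        slot = min(total - 1, int(midpoint * total / len(text)))
        buckets[slot].append(word)
        position = start + len(word)
    return [" ".join(words) for words in buckets]


english_chunks = ["This is a sample sentence of medium length",
                  "to illustrate translation differences."]
print(pause(english_chunks, 3))
print(blank(english_chunks, 3))
print(equalize(" ".join(english_chunks), 3))
```

Pause and blank keep the original chunks and only decide what fills the extra slot, while equalize changes the chunk boundaries themselves so that every slot carries part of the sentence.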

This brings us to the spectrum of synchronicity:

We can either treat the multilingual display as four independent displays sharing a screen, or we can keep the subtitles in sync by waiting until all are done displaying.

Waiting for every language to finish can end up being too slow to keep up with whoever is speaking, or it may force us to drop parts of the longer translations. Letting each language update independently can end up being too chaotic to read because so many languages are changing on a single display.

For Spf.io’s multilingual projector view, we experimented with these different options and settled on the following solution:

Recommended Solution

We keep translations synchronous, but instead of waiting for the last subtitle to complete, we keep pace with the speaker and immediately show the next subtitle for all languages even if some of them have not displayed all their chunks.
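To illustrate the consequence of this rule, the sketch below works out which chunks of a language reach the screen before the next subtitle arrives. It is a simplified model under stated assumptions: every chunk gets the same fixed display time, and a chunk counts as shown if it starts before the next subtitle comes in. The function name and the timing values are hypothetical.

```python
from typing import List, Tuple


def split_at_next_subtitle(chunks: List[str], seconds_per_chunk: float,
                           seconds_until_next: float
                           ) -> Tuple[List[str], List[str]]:
    """Return (shown, dropped) chunks for one language."""
    shown = 0
    # A chunk is shown if its start time falls before the next subtitle.
    while (shown < len(chunks)
           and shown * seconds_per_chunk < seconds_until_next):
        shown += 1
    return chunks[:shown], chunks[shown:]


english = ["This is a sample sentence of medium length",
           "to illustrate translation differences."]
indonesian = ["Ini adalah contoh kalimat dengan panjang",
              "sedang untuk mengilustrasikan perbedaan",
              "terjemahan."]

# Hypothetical timing: 3 seconds per chunk, next sentence after 6 seconds.
for name, chunks in (("English", english), ("Indonesian", indonesian)):
    shown, dropped = split_at_next_subtitle(chunks, 3.0, 6.0)
    print(name, "shown:", len(shown), "dropped:", dropped)
```

With these numbers both English chunks are shown, while the third Indonesian chunk never reaches the screen because all languages move on to the next subtitle together.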

The 11-second animation below illustrates what this looks like with our sample sentence.

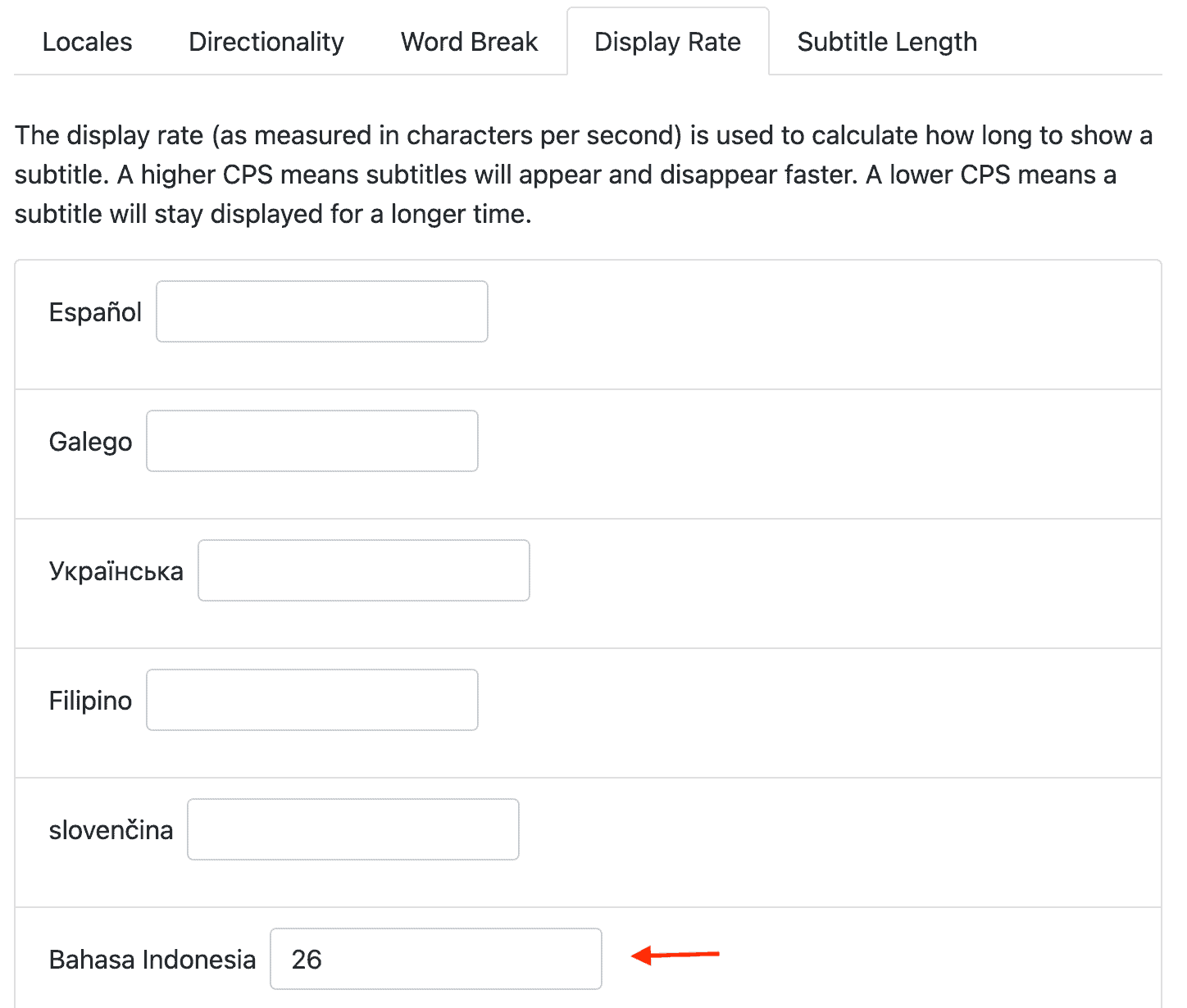

To compensate for the risk of not showing parts of some long translations, we enable users to adjust the display rate and subtitle length for each language so they can tune it for their content and pace of speaking.

If a language is showing subtitles too slowly to keep up, you can increase the characters per second to speed it up. You can also change the subtitle length so that it shows more characters per chunk. Using this in combination with font sizing can make longer language subtitles fit without being hard to read.
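The interaction between these two settings can be sketched with a little arithmetic. Assuming a sentence must be fully shown within a fixed time window and each chunk gets an equal share of it, the snippet below reports how many chunks a given subtitle length produces and the characters per second needed to get through the longest chunk. The function `tuning_report` and the 6-second window are illustrative assumptions, not spf.io's actual timing model.

```python
import textwrap
from typing import Tuple


def tuning_report(text: str, subtitle_length: int,
                  window_seconds: float) -> Tuple[int, float, float]:
    """Return (chunk count, seconds per chunk, required chars per second)."""
    chunks = textwrap.wrap(text, width=subtitle_length)
    if not chunks:
        raise ValueError("text must contain at least one word")
    seconds_per_chunk = window_seconds / len(chunks)
    required_cps = max(len(chunk) for chunk in chunks) / seconds_per_chunk
    return len(chunks), seconds_per_chunk, required_cps


indonesian = ("Ini adalah contoh kalimat dengan panjang sedang "
              "untuk mengilustrasikan perbedaan terjemahan.")

for length in (42, 50):
    count, seconds, cps = tuning_report(indonesian, length, 6.0)
    print(length, count, seconds, round(cps, 1))
```

In this model, raising the subtitle length from 42 to 50 characters turns three chunks into two and lowers the pace the audience has to read at, provided the font size still lets 50 characters fit.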

Try out your own ideas

Spf.io provides an experimental framework for you to discover the best way to show multilingual subtitles for your event. If you only need two languages, perhaps a single lower-third subtitle on two different public displays will suffice. If you need four languages, perhaps laying them out horizontally on a single screen will work.

And even if you need to display 20 languages that vary widely in length, spf.io provides you with the tools to take a holistic approach to creating readable, synchronous multilingual subtitles. You could, for example:

- Put four languages on one public screen

- Tune font sizes, characters per second and subtitle length so that four languages can be comfortably read on one display.

- When you have a prepared manuscript, translate it automatically in advance and use spf.io’s AI tools to generate paraphrased translations for subtitles that are too long.

- Distribute tablets for people who need one of the other languages not on public display.

- Share a link and QR code so people can access translation on their smartphones.

So what's the best way to show multilingual subtitles?

The answer is…it depends!

Give your audience the best possible experience by trying out the different approaches we described above. When you find the right solution for you, lock it in place with spf.io’s tools so you can reproduce that experience every single time. Give people access to your event in any language with readable, synchronous multilingual subtitles.